AWS offers a rich set of tools and dashboards to understand the billing data of its services from tracking, visualizing and alerting, many use cases are covered with AWS native tools. However, in reality, many companies and operation teams have very custom use-cases for monitoring and alerting, which might have to take billing data into account. Some might:

- not be in a comfortable position to focus solely on one single cloud provider and have to unify different billing data sources or formats

- want to use their existing monitoring and alerting system tailored to their needs to also be able to process billing data

- want to train their very own machine learning application to find possible optimizations in their own, or in billing data of their customers.

Fortunately, AWS does offer to export their billing data in a plain CSV format. This enables us to create a tailored approach to process AWS billing data. This article will share some insight, how one could approach this issue to process AWS billing records, transform these as needed and push these to influxDB, a popular time-series database, and to graph these values using Grafana.

Identify and understand the source fields of the cost reports

Before we can start processing CSV data, we must understand the meaning of the fields. Be aware that AWS itself does offer a legacy format, called “Detailed billing report” (DBR) and the current format “Cost and Usage Report” (CUR). The CUR itself can contain more than 120 fields in its CSV, having the documentation at hand is definitely helpful: cost-reporting. If your goal is to have a detailed business intelligence report, you will need a data warehousing application. In this case, these reports can be imported and queried by Amazon Redshift billing with AWS Redshift. But since we want to build an alerting tool and operations dashboard, we are going to trim the fields down significantly to not kill our monitoring infrastructure. Interesting values to consider from the CUR are:

- lineItem/LineItemType: the CUR does not only contain on-demand costs, but also tax, refunds, upfront fees and more. If you only need on-demand costs and leave taxes for the business controlling team, filter this field for the value ‘usage’

- lineItem/ProductCode: the product code will note the used AWS service, f.e. “AmazonEC2”

- lineItem/UsageAccountId: the account which used the resource

- lineItem/UnblendedCost: the cost for the particular item. Depending on your usage case, there are also the fields UnblendedRate and UnblendedUsage, and BlendedCosts/Rates, which provide average rates for organziations. When in doubt, consult the documentation.

- identity/TimeInterval: the reports do not give a timestamp when the billed event occurred, but the interval on an hourly basis. We’ll use this interval and extract a timestamp, this will be enough for a dashboard. To track the live time of a resource, the field lineItem/UsageStartDate and lineItem/UsageEndDate might be more suitable

Planning the data model

InfluxDB is the database of our choice in this example, to store the data and serve as a backend for our visualization and monitoring. Another popular choice for this role would be Prometheus. InfluxDB divides data into two categories, tags and fields.

Tags are providing an index and partitioning data sets, it’s mostly intended to contain metadata and structure your data. The ‘actual’ data will be contained in fields. A data set with fields and tags will also need a measurement name to group a series of values and a timestamp for each data point since InfluxDB is a time-series database.

Selecting the right amount of data for a monitoring probe is difficult to judge. An optimistic data analyst might want to gather all data and formulate the use-cases later. However, an operations engineer will rather want his monitoring infrastructure to be as most ‘real’ time as he can achieve, where smaller data-sets are definitely easier to handle and less wasteful.

Extract, Transform, Load

Having multiple different input sources and possibly multiple different monitoring use-cases, we will need to transform the data. For an incoming CSV, we need to identify which csv-fields to use as tags and which as fields. The basis will be a custom schema:

csv_format = {

'dbr-cost-allocation': {

'tags': [

'productcode',

'linkedaccountname'

],

'fields': [

'totalcost'

],

'time': 'invoicedate'

},

"cur_report": {

'tags': [

'lineitem/lineitemtype',

'lineitem/productcode',

'lineitem/usageaccountid'

],

'fields': [

'lineitem/unblendedcost'

],

'time': 'identity/timeinterval'

},

....Each section defines a dedicated InfluxDB measurement, identifying which CSV values should be used as timestamp, field or tag. All other CSV values will be discarded. The examples above are already highlighting the differences between the legacy DBR format and the newer CUR format, although both are native AWS billing records. To unify both and to be able to graph unified dashboards, we’ll use another table to rename fields where necessary:

map_fields = {

'linkedaccountname': 'accountname',

'lineitem/lineitemtype': 'chargetype',

'lineitem/productcode': 'productcode',

'lineitem/unblendedcost': 'totalcost',

'lineitem/usageaccountid': 'accountname'

}An attentive reader will stumble across one line in the listing above: unblendedcost will be renamed totalcost, although it was stated above that unblendedcosts are costs of very particular items, in any case not a total by any means. This will only make sense with the next section:

reduce_values = {

....

"cur_report":

"fields": (add, 'totalcost')

...(Where map and reduce means higher-order functions: Fold_(higher-order_function, not Googles MapReduce in particular.)

The fields noted above will be combined with every other entry, which has the same primary key, using the noted function. In this case, all unblendedcosts (already renamed in the listing above!) will be added up to calculate the total costs. InfluxDB considers a unique key the product of tags x measurement x timestamp. Another possible use-case could be to calculate the most expensive service usage, by using a ‘max’ function, instead of an ‘add’ function.

Now to combine all of the above, we can build an algorithm to transform each CSV and build a custom data model, shown as pseudocode:

for csv in csv_list:

reduce(rename(filter(format, csv)))

# for each csv row, apply a filter/schema, rename the fields and

# finally sum fields So now we have a plan, but we still need to:

Fetch reports from S3

One major feature of AWS and other cloud providers is the ease of use and the ability to do nearly every action using well documented (and implemented!) APIs. For everything AWS related, the one-stop-shop for python is Boto3: boto3.amazonaws.com

To download a csv to its as easy as:

s3client.download_file(Bucket=bucket, Key=file_key, Filename=local_filename)… when we would not have to account for different file names, locations and formats. But we can use the same schema for the file retrieval as we did for the data model. First, detect if a file in the s3 bucket is a billing report by matching the filename against a pattern, iterate until all files are scanned and return the found list if finished.

regex_pattern = {

'3rd-party-preprocessed': '^\d\d\d\d-\d\d-\d\d.zip$',

#will match f.e.: 2019-01-01.zip

'native_dbr': '^\d\d\d\d\d\d\d\d\d\d\d\d-aws-cost-allocation-.*.csv',

#will match <AWS-account-id>-aws-cost-allocation-2019-01-01.csv

'cur_report' = "{}/{}/{}/{}-Manifest.json".format(prefix,report_name,billing_period_regex,report_name)

# will match CUR report path using the regex:

# billing_period_regex = '\d\d\d\d\d\d\d\d-\d\d\d\d\d\d\d\d'

}

...

finished = False

response = s3client.list_objects_v2(Bucket=bucket)

while not finished:

for c in response['Contents']:

if re.match(regex_pattern[csv_formatting], c['Key']):

result.append(c['Key'])

#more items to process?

finished = not response['IsTruncated']

if not finished:

logging.info("Fetching next 1000 items")

continue_token = response['NextContinuationToken']

response = s3client.list_objects_v2(Bucket=bucket,ContinuationToken=continue_token)

return resultThe same as matching filename patterns will be likely needed for all the other details a programmer and/or data engineer highly enjoys: timestamps and time zones, file encoding, CSV styles (it’s not always comma-separated…) and more.

You might, for example, encounter a CSV formatted using ; as a separator, using Unix line-terminator and latin-1 encoding, while the AWS native one would use windows style line terminator, separate fields with , and utf-8 encoding. The following listing illustrates this issue:

if '3rd-party-csv_file' in filename:

csv_file = file_obj.readlines()

csv_decoded = [ x.decode('latin-1') for x in csv_file]

dialect = csv.unix_dialect

dialect.delimiter = ';'

csv_reader = csv.DictReader(csv_decoded, dialect=dialect)For someone implementing such an approach: always remember Bobby Tables –

Graph AWS Billing Data

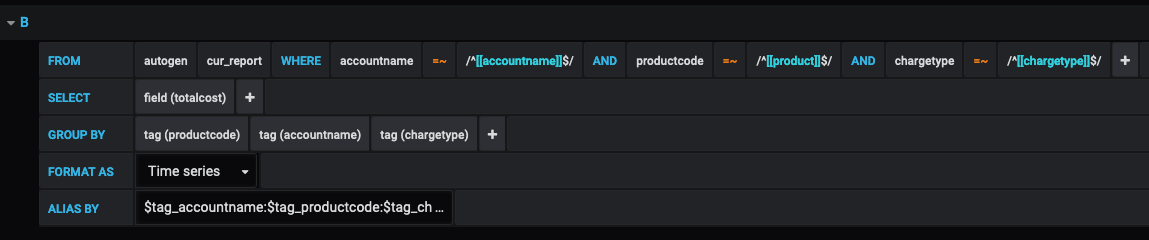

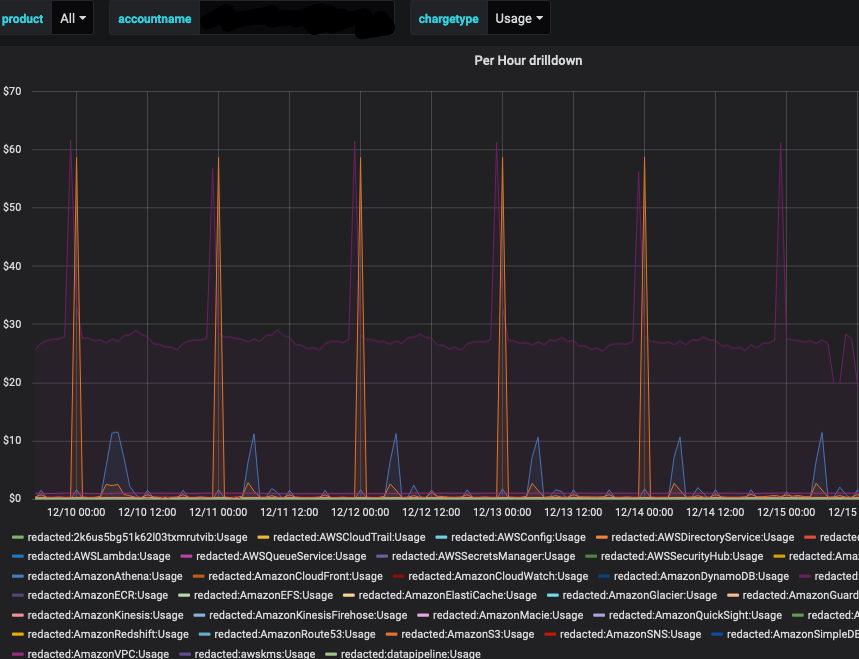

After the data has been discovered, downloaded, extracted, formatted, renamed, added up and pushed to influxDB, we can finally build fancy dashboards and fierce alerts. This, however, is an art in and of itself and might be covered in-depth in a different blog article. However, since this article is titled Graphing AWS Billing Data I will show you a simple dashboard which uses the data model suggested above. The following query against influxDB in a Grafana Panel:

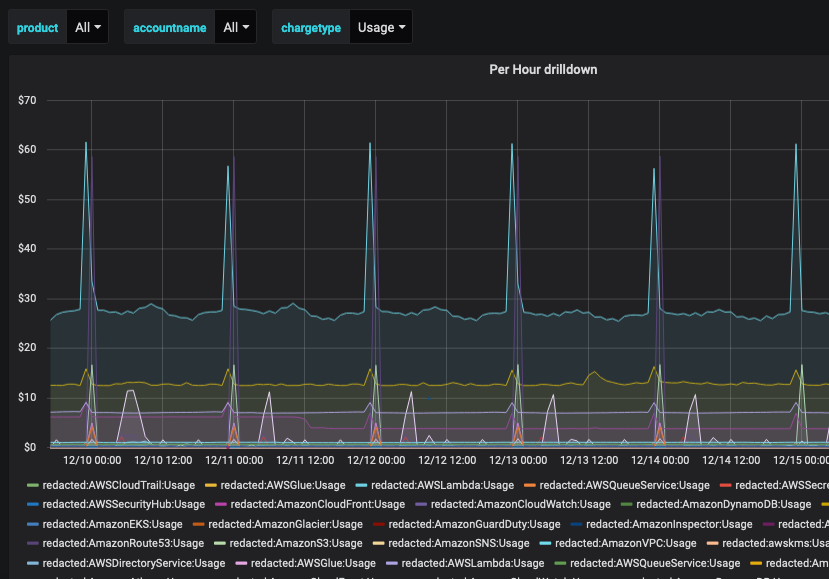

Will give you a nice Graph, already capable of drilling down by either product, account, or charge type. As seen below:

General Dashboard

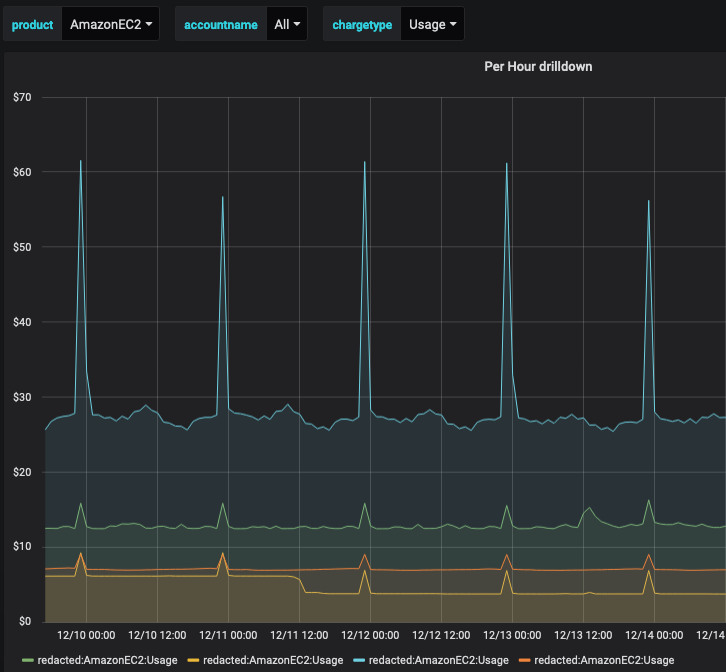

Same by Product:

By Account:

From here you can start to graph your very own visualization for the KPI you need. The spikes you see in the graphs are services which are billed on a daily usage basis, f.e. S3. If you only want the per-day total costs, this could be displayed as a mean per day to flatten the spikes.